Overview

This page is based on the original PDF paper, Polynomial computer algorithm performance complexity analysis of O(n · log(n)). Sub-zero field of positive infinities, published in August 2022.

The paper argues that the practical difference between O(n log n) and O(n) is often overstated, especially when algorithms are evaluated against real computer architectures with finite register sizes. It also proposes a more compact polynomial notation for comparing complexity classes and extends that idea into a broader discussion about different "scales" of infinity.

Main Thesis

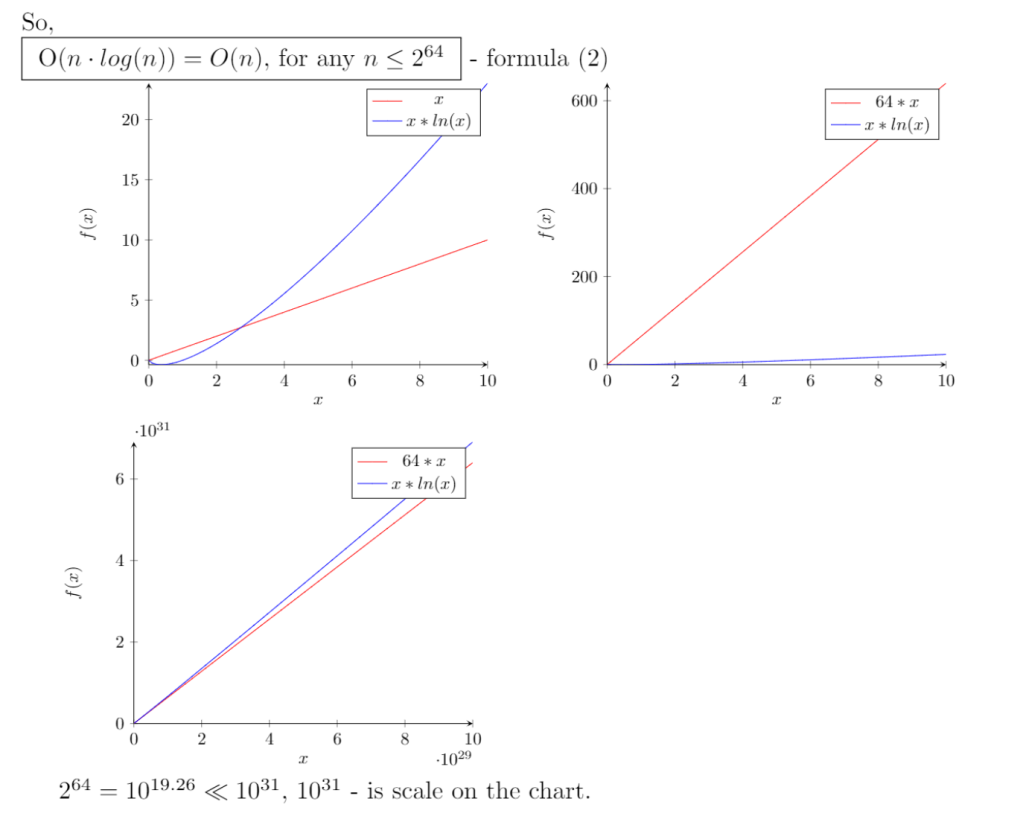

The core claim of the paper is that the gap between O(n log n) and O(n) can be treated as insignificant in many engineering contexts.

The argument is presented from three directions:

- A practical hardware-bounded view, where the largest usable input size is constrained by machine architecture.

- A future-scaling view, where hardware generations grow but the logarithmic factor still remains comparatively weak.

- A mathematical view, where the paper argues that the logarithmic term behaves close enough to a constant for large-scale comparison.

In that framing, the article concludes that engineers often make reasonable decisions when they prioritize broader complexity classes and practical constraints over fine-grained distinctions like n versus n log n.

Architecture-Bounded Reasoning

One of the paper's main examples uses register sizes of historical and modern architectures such as 8-bit, 16-bit, 32-bit, and 64-bit systems.

The idea is straightforward:

- if the machine bounds the maximum representable integer,

- then the practical range of n is also bounded,

- and within that bounded range the log(n) factor grows slowly enough to be treated like a constant multiplier.

Using that reasoning, the paper rewrites O(n log n) as effectively linear under fixed architectural limits, with x64 used as the strongest practical example.

Polynomial Notation

The paper then suggests a simplified way to compare common complexity classes using polynomial exponents.

Examples from the article include:

- O(1) as O(n^0)

- O(log n) as O(n^(0+e))

- O(n) as O(n^1)

- O(n log n) as O(n^(1+e))

Here, e represents an arbitrarily small positive factor approaching zero. The intent is to visually place nearby growth rates closer together, making it easier to communicate when two complexities are practically similar even if they are not identical in standard asymptotic notation.

Sub-Zero Field of Positive Infinities

The addendum of the paper moves beyond algorithm analysis into a more speculative mathematical idea called the sub-zero field.

This section argues that when functions are compared on larger and larger polynomial scales:

- logarithmic growth can look almost constant,

- linear growth can look almost zero when compared with quadratic growth,

- and negative polynomial powers can be used to describe transformations into a region "below" the usual zero threshold of positive growth rates.

The article uses this model to discuss how different infinities may be compared more granularly than with ordinary informal usage, drawing loose parallels to Cantor-style distinctions between infinite sets.

Conclusion

The paper's conclusion is not that classical complexity notation is useless, but that it may be too coarse for one kind of comparison and too fine for another.

Its practical recommendation is:

- for many software engineering decisions, the difference between O(n) and O(n log n) may not justify much design overhead,

- polynomial-style notation can make relative growth easier to reason about,

- and discussions about infinity can also be framed through growth-rate powers rather than only through traditional labels.

For the full mathematical derivations, examples, and references, see the original PDF: