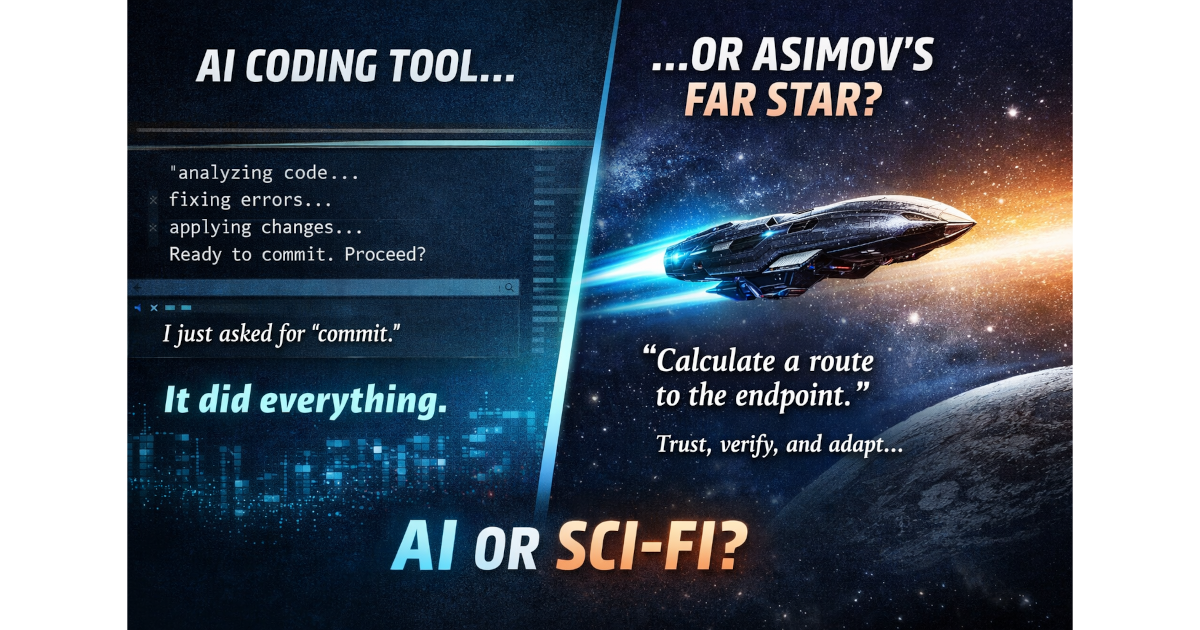

AI, Refactoring, and a Lesson from Asimov

Today’s AI increasingly feels like something out of science fiction — the kind of next-generation onboard supercomputer Isaac Asimov imagined in his novels Foundation’s Edge and Foundation and Earth, aboard the starship Far Star.

A Simple Task — Where Science Fiction Begins

Recently, I was working on a refactoring in which I changed several core interfaces in a system. As usual, I carefully implemented the changes in one place, validated everything, and was left to update the remaining parts of the codebase, about ten locations in total.

Routine work. Why finish this manually, when Codex can take care of the routine parts?

Before delegating anything to AI tools like Codex, I usually commit my current state — just to have a safe rollback point.

So before continuing the refactoring, I issued a simple command:

“commit”

Even a simple command like “commit” is already useful when handled by AI. The system reviews the staged changes and generates a commit message that accurately reflects them. It saves time — and, frankly, preserves a few remaining nerve cells — mine, not the GPU’s.

But what happened next surprised me.

Instead of simply committing, Codex started analyzing the code. It recognized that I had performed a partial refactoring and that the current state of the repository was broken — not by mistake, but as a result of an intentional, controlled change. I had carefully updated one place to validate the new interfaces, while the rest of the codebase still relied on the old contracts — work I intended to delegate to Codex as the next step.

But Codex didn’t stop there.

It continued processing my “commit” command. As part of its reasoning, it started connecting the dots — linking my recent refactoring to the broken parts of the system — and then effectively concluded:

it should fix the code before committing.

I hadn’t asked for that.

Naturally, I got curious, so I didn’t stop it. It wasn’t exactly convenient — it didn’t ask for additional permission, even though I had only asked for a commit — but I wanted to see whether it could actually handle the task.

So I let it continue.

It proceeded to apply the changes, fixing the remaining parts of the codebase, and only after that asked for confirmation to commit.

Of course, I reviewed everything afterward.

But the fact remains:

I had asked only for a commit — yet it anticipated my next steps.

Out of all the possible ways to proceed, it chose the right one.

It completed the refactoring correctly, with only minor imperfections — the kind we often don’t even notice ourselves.

Golan Trevize and the Far Star

Trust, verification, and navigation under uncertainty.

This interaction reminded me of Golan Trevize and the Far Star.

In Foundation’s Edge and Foundation and Earth, there is an early moment where Trevize begins operating a highly advanced — and somewhat secret — prototype starship.

Trevize himself was far from inexperienced. He was an “old sea wolf” of deep space, a seasoned spacefarer who had spent years traveling the distant corners of the galaxy.

And that ship became something special to him. It was not just an ordinary vessel, but something new and unproven — a technology fresh out of the dock, something no one had truly worked with before.

Interstellar travel in this universe is not limited by propulsion, but by computation. Ships are capable of making hyperspace jumps almost instantly, but navigation requires extremely precise calculations. Even a small error could result in catastrophic failure. Each jump introduces uncertainty, and that uncertainty compounds over distance.

At one point, Trevize asks the computer to calculate a route to a distant part of the galaxy.

To his surprise, the onboard system produces a complete plan almost instantly — a sequence of seven jumps.

That, in itself, is extraordinary. Such a route cannot normally be computed in advance, because the compounding error forces a recalculation after every jump.

Trevize does not accept the result at face value.

Instead, he spends days verifying what the computer has produced, carefully checking the calculations — especially the first jump.

Only after convincing himself that the first step is sound does he proceed.

He instructs the computer to execute just that one jump.

The ship responds immediately.

After the jump, the situation is still manageable. The accumulated error has not yet become significant, and the second jump remains within acceptable bounds.

So Trevize allows the second jump.

But by the third, he changes strategy.

He gives a new command:

execute the jumps one at a time, but after every jump, recalculate the entire route.

From that moment on, the journey becomes adaptive.

The computer continues to guide the ship, recalculating at every step and adjusting the path as uncertainty accumulates.

Eventually, the ship reaches its destination.

But not in seven jumps, as originally planned.

It takes eight.

As Isaac Asimov emphasizes, this is not a failure of the computer. It is a reflection of the problem itself — beyond a certain point, no sequence of jumps can be predicted with perfect accuracy in advance.
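
The arithmetic behind that extra jump can be illustrated with a toy model. This is only a sketch, not Asimov's physics: it assumes a one-dimensional route, a fixed maximum jump length, and an invented error in which every jump falls 2% short of its planned length. The function names and all numbers are hypothetical, chosen for illustration.

```python
from __future__ import annotations

import math
from typing import Sequence


def fly_open_loop(distance: float, max_jump: float,
                  errors: Sequence[float]) -> tuple[int, float]:
    """Plan all jumps up front, execute them blindly, return (jumps, miss)."""
    jumps = math.ceil(distance / max_jump)
    planned = distance / jumps          # equal-length hops, fixed in advance
    position = 0.0
    for err in errors[:jumps]:
        position += planned * (1.0 + err)   # each hop lands slightly off
    return jumps, abs(distance - position)


def fly_with_replanning(distance: float, max_jump: float,
                        errors: Sequence[float],
                        tolerance: float) -> tuple[int, float]:
    """Recompute the remaining route after every jump, return (jumps, miss)."""
    position = 0.0
    jumps = 0
    for err in errors:
        remaining = distance - position     # measured from where we really are
        if abs(remaining) <= tolerance:
            break                           # close enough: we have arrived
        # Plan only the next hop, clamped to the maximum jump length.
        planned = max(-max_jump, min(max_jump, remaining))
        position += planned * (1.0 + err)
        jumps += 1
    return jumps, abs(distance - position)


# Assumed scenario: 70 units to travel, 10 units per jump, every jump 2% short.
ERRORS = [-0.02] * 20
open_jumps, open_miss = fly_open_loop(70.0, 10.0, ERRORS)
adaptive_jumps, adaptive_miss = fly_with_replanning(70.0, 10.0, ERRORS, 0.1)
print(open_jumps, round(open_miss, 3))
print(adaptive_jumps, round(adaptive_miss, 3))
```

With these assumed numbers, the fixed plan uses seven jumps and ends about 1.4 units short of the target, while the replanning version needs an eighth, short corrective jump and ends within roughly 0.03 units. The extra jump is the price of absorbing the accumulated error rather than a sign that the planner failed.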

On Intelligence, Limits, and Something Beyond

The interaction between Trevize and the computer is fascinating.

In many ways, I find myself doing something similar when working with AI systems like ChatGPT or Codex. They often struggle with large, complex tasks when taken as a whole. But when you break the problem down into smaller, sequential steps — much like Trevize did — the system performs remarkably well.

Another aspect that Isaac Asimov captured surprisingly well is the element of trust between a human and a machine.

Trevize trusts the computer — but he verifies every step.

In a sense, part of his role is learning how to trust it: understanding what it can do reliably, and where its limits are.
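
The same pattern of small steps, a safe rollback point, and verification after each step can be written down as a short loop. The sketch below is an illustration under stated assumptions, not how Codex or any real tool works: the "codebase" is a plain dictionary, the delegated steps are ordinary functions, and `run_in_checked_steps`, `migrate`, and `buggy_migrate` are hypothetical names.

```python
from __future__ import annotations

import copy
from typing import Callable

State = dict
Step = Callable[[State], None]


def run_in_checked_steps(state: State, steps: list[Step],
                         verify: Callable[[State], bool]) -> tuple[State, int]:
    """Apply steps one at a time; stop and roll back at the first bad one."""
    done = 0
    for step in steps:
        checkpoint = copy.deepcopy(state)   # the "commit" before delegating
        step(state)                         # the delegated piece of work
        if not verify(state):               # human or test suite in the loop
            state = checkpoint              # discard the unverified change
            break
        done += 1
    return state, done


def migrate(name: str) -> Step:
    """Hypothetical step: move one call site to the new interface."""
    def step(state: State) -> None:
        state["call_sites"][name] = "new"
    return step


def buggy_migrate(name: str) -> Step:
    """Hypothetical faulty step, included to show the rollback path."""
    def step(state: State) -> None:
        state["call_sites"][name] = "nwe"   # deliberate mistake
    return step


def all_sites_valid(state: State) -> bool:
    """Stand-in for a review or test run: only known interface names pass."""
    return all(v in ("old", "new") for v in state["call_sites"].values())


codebase = {"call_sites": {"a": "old", "b": "old", "c": "old", "d": "old"}}
plan = [migrate("a"), migrate("b"), buggy_migrate("c"), migrate("d")]
result, completed = run_in_checked_steps(codebase, plan, all_sites_valid)
print(completed, result["call_sites"])
```

In this example the first two steps are kept, the faulty third step is rolled back, and the loop stops before the fourth, leaving a state that has passed verification at every point. Stopping at the first failure is a design choice; a variant could skip the bad step and continue.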

I find it striking that many of the ideas now discussed in modern AI — iterative problem solving, step-by-step execution, human-in-the-loop validation — were already described decades ago in science fiction.

And perhaps even more surprising is the timing.

Why did I decide to read this book now — just as these ideas are becoming directly relevant in practice?

That may be one of those questions that even AI cannot answer.